AI · Distributed Architecture

IntelliChat AI

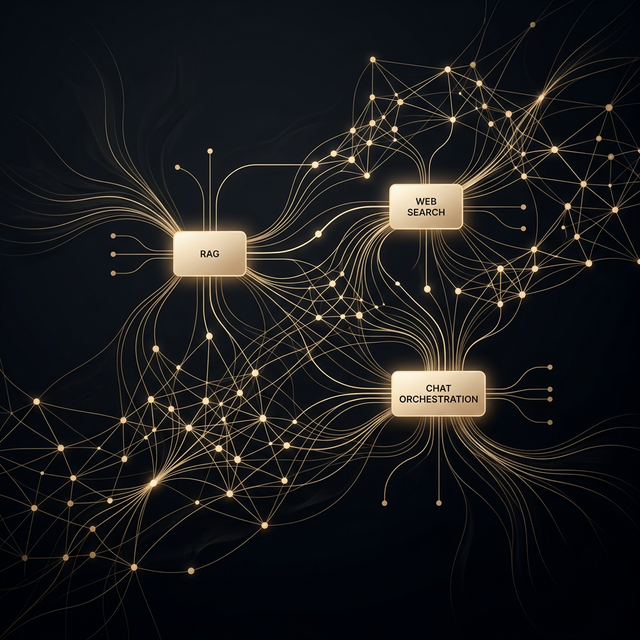

Microservice-based AI orchestration platform with dynamic tool routing across RAG, web retrieval, and conversational services.

The Challenge

Intelligent prompt routing.

Modern conversational AI systems face a fundamental architectural challenge: a single monolithic model cannot optimally serve every query type. Document-grounded questions demand retrieval-augmented generation, factual queries benefit from real-time web search, and open-ended conversations require different inference parameters entirely.

The challenge was to engineer a system that autonomously classifies user intent in real time, routes each query to the optimal service, and orchestrates responses across multiple specialized backends, all with sub-second latency.

The Architecture

Multi-service orchestration.

The system is decomposed into independent microservices, each responsible for a single domain of intelligence:

Intent Classification Layer

Fine-tuned BERT model that analyzes incoming prompts and classifies them into intent categories (RAG, web search, general chat) with confidence scoring. Fallback logic ensures graceful degradation.
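Confidence-based routing with a fallback can be illustrated with a short sketch. It assumes the classifier emits one raw logit per intent. The label names, the 0.7 threshold, and the choice of general chat as the fallback target are hypothetical; the source does not document the real values.

```python
import math
from dataclasses import dataclass

# Hypothetical label order matching the three intent categories above.
INTENTS = ("rag", "web_search", "general_chat")
# Assumed threshold and fallback target; the real system's values are not documented.
CONFIDENCE_THRESHOLD = 0.7
FALLBACK_INTENT = "general_chat"


@dataclass
class RoutingDecision:
    intent: str
    confidence: float
    fell_back: bool


def softmax(logits):
    """Convert raw classifier logits into probabilities (shifted for numerical stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route(logits, threshold=CONFIDENCE_THRESHOLD):
    """Pick the most probable intent, or fall back when the classifier is unsure."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    confidence = probs[best]
    if confidence < threshold:
        return RoutingDecision(FALLBACK_INTENT, confidence, True)
    return RoutingDecision(INTENTS[best], confidence, False)
```

For example, `route([4.0, 0.5, 0.1])` selects `rag` with a confidence of about 0.95. Near-uniform logits such as `[1.0, 0.9, 0.8]` stay below the threshold and fall back to `general_chat`.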

RAG Service

Document ingestion with semantic chunking, vector embeddings indexed in FAISS for similarity search, and retrieval-augmented response generation with source attribution and context window management.
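The retrieval half of this pipeline can be sketched in pure Python under several stated assumptions. `embed` is a toy hashed bag-of-words vector standing in for a real embedding model. `chunk_words` uses fixed-size overlapping word windows rather than true semantic chunking. `FlatIPIndex` is a hypothetical stand-in for an exact FAISS index such as `IndexFlatIP`, which scores by inner product; that score equals cosine similarity when vectors are L2-normalized. The chunk sizes and the word budget in `build_context` are illustrative values, not the project's.

```python
import math
import re
import zlib
from dataclasses import dataclass

DIM = 1024  # assumed dimensionality of the toy embedding


@dataclass
class Chunk:
    source: str   # document identifier, kept for source attribution
    text: str
    vector: list


def chunk_words(text, size=40, overlap=10):
    """Split text into overlapping fixed-size word windows (stand-in for semantic chunking)."""
    assert size > overlap >= 0
    words = text.split()
    chunks = []
    for start in range(0, len(words), size - overlap):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break
    return chunks


def embed(text, dim=DIM):
    """Toy hashed bag-of-words vector, L2-normalized (stand-in for a real embedding model)."""
    vec = [0.0] * dim
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        vec[zlib.crc32(token.encode()) % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class FlatIPIndex:
    """Exact (brute-force) inner-product search; on normalized vectors this is cosine similarity."""

    def __init__(self):
        self.chunks = []

    def add_document(self, source, text):
        for piece in chunk_words(text):
            self.chunks.append(Chunk(source, piece, embed(piece)))

    def search(self, query, k=3):
        q = embed(query)
        scored = [(sum(a * b for a, b in zip(q, c.vector)), c) for c in self.chunks]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]


def build_context(hits, max_words=120):
    """Pack the best hits into a word budget, prefixing each with its source for attribution."""
    parts, used = [], 0
    for _score, chunk in hits:
        n = len(chunk.text.split())
        if used + n > max_words:
            break
        parts.append(f"[{chunk.source}] {chunk.text}")
        used += n
    return "\n".join(parts)
```

In use, documents are added with `index.add_document(name, text)`. The string returned by `build_context(index.search(question))` is then placed into the generation prompt. The `[source]` prefixes let the model attribute each claim to a document.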

Web Retrieval Service

Real-time web search integration with result parsing, relevance filtering, and LLM-based synthesis to generate factual, sourced responses for time-sensitive queries.
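Relevance filtering and sourced synthesis might look like the sketch below. It assumes the search backend returns a relevance score per result. `SearchResult`, the `min_score` cutoff, the one-result-per-domain rule, and the prompt wording are all hypothetical choices made for illustration, and the LLM call itself is left out.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass
class SearchResult:
    url: str
    title: str
    snippet: str
    score: float  # relevance score from the (unspecified) search backend


def filter_results(results, min_score=0.5, max_results=3):
    """Keep the highest-scoring results above a cutoff, at most one per domain."""
    seen_domains = set()
    kept = []
    for r in sorted(results, key=lambda r: r.score, reverse=True):
        domain = urlsplit(r.url).netloc.lower()
        if r.score < min_score or domain in seen_domains:
            continue
        seen_domains.add(domain)
        kept.append(r)
        if len(kept) == max_results:
            break
    return kept


def build_synthesis_prompt(question, results):
    """Number each source so the LLM can cite it as [n] in its answer."""
    lines = [f"[{i}] {r.title} ({r.url}): {r.snippet}" for i, r in enumerate(results, start=1)]
    sources = "\n".join(lines)
    return (
        "Answer the question using only the sources below and cite them as [n].\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}\nAnswer:"
    )
```

The per-domain limit trades a little recall for source diversity. This keeps a single site from dominating a synthesized answer.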

Spring Boot Orchestrator

Central coordinator managing request lifecycle, service health, load balancing, and response aggregation. REST API gateway for frontend consumption.
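The orchestrator's load-balancing and health duties can be outlined language-agnostically. The sketch is written in Python to match the other examples, although the actual coordinator is a Spring Boot service. It assumes round-robin selection over healthy instances and passive health checking, where an instance that fails a call is ejected. `ServicePool`, `Orchestrator`, and the injected `call` transport are hypothetical names, and the returned dict is only an illustrative response envelope.

```python
import itertools
from dataclasses import dataclass, field


@dataclass
class Instance:
    url: str
    healthy: bool = True


@dataclass
class ServicePool:
    """Round-robin over the currently healthy instances of one backend service."""
    instances: list
    _counter: itertools.count = field(default_factory=itertools.count)

    def next_healthy(self):
        healthy = [i for i in self.instances if i.healthy]
        if not healthy:
            raise RuntimeError("no healthy instances")
        return healthy[next(self._counter) % len(healthy)]


class Orchestrator:
    def __init__(self, pools, call):
        self.pools = pools  # intent -> ServicePool
        self.call = call    # hypothetical transport: (url, prompt) -> answer string

    def handle(self, intent, prompt, max_attempts=2):
        """Forward the prompt to a healthy instance, retrying on another one after a failure."""
        pool = self.pools[intent]
        last_error = None
        for _ in range(max_attempts):
            instance = pool.next_healthy()
            try:
                answer = self.call(instance.url, prompt)
                return {"intent": intent, "served_by": instance.url, "answer": answer}
            except ConnectionError as exc:
                instance.healthy = False  # passive health check: eject the failing instance
                last_error = exc
        raise RuntimeError(f"{intent} service unavailable") from last_error
```

A production gateway would also re-admit ejected instances, for example by periodically probing a health endpoint. That step is omitted here for brevity.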

The Outcome

Autonomous intelligence.

The resulting platform autonomously routes queries with high classification accuracy. The microservice architecture enables independent scaling of each service: the RAG pipeline handles document-heavy workloads independently of the conversational service, and each component can be updated without system-wide downtime.

The BERT classifier's confidence-based routing eliminates the need for users to manually specify their intent, creating a seamless conversational experience regardless of query type.