LLM · Query Generation

AI Database Manager

Natural language to SQL transpilation via locally hosted Mistral inference with schema-aware prompting and human-in-the-loop execution.

The Challenge

Democratizing database access.

Non-technical stakeholders often need data from relational databases but lack SQL proficiency. Many existing NL-to-SQL solutions rely on cloud APIs, which introduces latency, cost, and data privacy concerns. The challenge was to engineer a system that translates natural language queries into valid, executable SQL while running entirely on local infrastructure, with no external API dependencies.

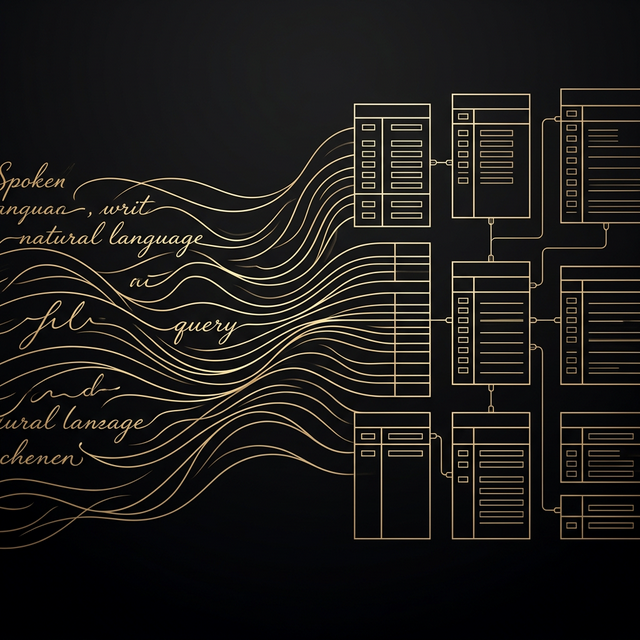

The Architecture

Schema-aware transpilation.

Schema Introspection

Table names, column types, constraints, and relationships are extracted automatically from the target database. This schema context is injected into the LLM prompt so that generated SQL refers only to tables and columns that actually exist.
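As a rough illustration of the introspection step, the sketch below reads tables, columns, and foreign keys and renders them as compact text for a prompt. It assumes a SQLite target accessed through Python's standard `sqlite3` module; the project's actual database engine is not stated, and `describe_schema` and its output format are hypothetical names chosen for this example.

```python
import sqlite3


def describe_schema(conn: sqlite3.Connection) -> str:
    """Summarise tables, columns, and foreign keys as prompt-ready text."""
    lines = []
    tables = conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        columns = []
        # PRAGMA table_info yields (cid, name, type, notnull, default, pk).
        for _cid, name, ctype, notnull, _default, pk in conn.execute(
            f'PRAGMA table_info("{table}")'
        ):
            flags = (" PRIMARY KEY" if pk else "") + (" NOT NULL" if notnull else "")
            columns.append(f"{name} {ctype}{flags}")
        lines.append(f"TABLE {table}({', '.join(columns)})")
        # PRAGMA foreign_key_list yields (id, seq, table, from, to, ...).
        for fk in conn.execute(f'PRAGMA foreign_key_list("{table}")'):
            lines.append(f"  FK {table}.{fk[3]} -> {fk[2]}.{fk[4]}")
    return "\n".join(lines)
```

Other engines expose the same information differently (for example through `information_schema`), so only the output shape carries over, not the queries.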

Local LLM Inference (Ollama + Mistral)

Mistral model hosted locally via Ollama. Zero cloud dependency. Natural language queries are transpiled into SQL through schema-constrained few-shot prompting with syntactic validation.
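A minimal sketch of how such a prompt might be assembled and sent to a local Ollama server follows. It uses Ollama's `/api/generate` endpoint on its default port with the `mistral` model tag. The prompt wording, the `FEW_SHOT` pairs, and the function names are assumptions made for illustration, not the project's actual prompt.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint

# Hypothetical few-shot pairs; real ones would be written for the target schema.
FEW_SHOT = [
    ("How many users are there?", "SELECT COUNT(*) FROM users;"),
    ("List the names of all users.", "SELECT name FROM users;"),
]


def build_prompt(schema: str, question: str) -> str:
    """Combine instructions, schema text, few-shot pairs, and the question."""
    parts = [
        "You translate questions into SQL for the schema below.",
        "Use only the listed tables and columns.",
        "Reply with a single SQL statement and nothing else.",
        "",
        "Schema:",
        schema,
        "",
    ]
    for example_question, example_sql in FEW_SHOT:
        parts += [f"Question: {example_question}", f"SQL: {example_sql}", ""]
    parts += [f"Question: {question}", "SQL:"]
    return "\n".join(parts)


def generate_sql(schema: str, question: str, model: str = "mistral") -> str:
    """Send the prompt to the local Ollama server and return the raw completion."""
    payload = json.dumps({
        "model": model,
        "prompt": build_prompt(schema, question),
        "stream": False,                  # ask for one JSON object, not a stream
        "options": {"temperature": 0},    # favour repeatable output
    }).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_URL, data=payload, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["response"].strip()
```

Calling `generate_sql(schema_text, "Which users placed an order?")` requires a running Ollama instance with the model already pulled. The returned text is still untrusted and should go through validation before anything is executed.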

SQL Validation & Safety

Generated SQL is parsed and validated before execution. Destructive operations (DROP, DELETE, TRUNCATE) require explicit confirmation. Snapshot-based rollback enables safe experimentation.
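The three safeguards described above could be sketched as follows, again assuming SQLite and the standard `sqlite3` module. Validation here compiles the statement with `EXPLAIN` instead of running it, the destructive check is a deliberately naive leading-keyword test, and the snapshot uses `Connection.backup`. All function names are hypothetical, and the project's own parser and rollback mechanism may differ.

```python
import re
import sqlite3

# Statement types the write-up lists as destructive.
DESTRUCTIVE = {"DROP", "DELETE", "TRUNCATE"}


def statement_type(sql: str) -> str:
    """Return the leading keyword in upper case, or '' if none is found.

    Naive on purpose: leading comments or stacked statements would need
    a real SQL parser to classify reliably.
    """
    match = re.match(r"\s*([A-Za-z]+)", sql)
    return match.group(1).upper() if match else ""


def needs_confirmation(sql: str) -> bool:
    """True when the statement must be explicitly confirmed by the user."""
    return statement_type(sql) in DESTRUCTIVE


def is_valid(conn: sqlite3.Connection, sql: str) -> bool:
    """Compile the statement against the live schema without executing it."""
    try:
        conn.execute("EXPLAIN " + sql).fetchall()
        return True
    except (sqlite3.Error, sqlite3.Warning):
        return False


def snapshot(conn: sqlite3.Connection) -> sqlite3.Connection:
    """Copy the whole database into a separate in-memory connection."""
    copy = sqlite3.connect(":memory:")
    conn.backup(copy)
    return copy


def restore(conn: sqlite3.Connection, copy: sqlite3.Connection) -> None:
    """Discard pending changes and overwrite the database with the snapshot."""
    conn.rollback()
    copy.backup(conn)
```

Taking a snapshot before a confirmed destructive statement and restoring it on request gives the undo behaviour described, at the cost of one full copy of the database per snapshot.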

Human-in-the-Loop Execution

Users review generated SQL before execution. Results are displayed in formatted tables. Query history enables refinement and iterative exploration of the database.
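The review loop might look roughly like the sketch below: nothing runs until an approval callback returns true, results are rendered as a plain-text table, and every decision is appended to a history list. The `approve` callback, `review_and_run`, and `format_table` are hypothetical names, and SQLite is assumed only to keep the example self-contained.

```python
import sqlite3
from typing import Callable, List, Optional, Sequence, Tuple


def format_table(headers: Sequence[str], rows: Sequence[Sequence[object]]) -> str:
    """Render query results as an aligned plain-text table."""
    cells = [[str(h) for h in headers]] + [[str(v) for v in row] for row in rows]
    widths = [max(len(row[i]) for row in cells) for i in range(len(headers))]

    def line(row: Sequence[str]) -> str:
        return " | ".join(v.ljust(w) for v, w in zip(row, widths)).rstrip()

    separator = "-+-".join("-" * w for w in widths)
    return "\n".join([line(cells[0]), separator] + [line(r) for r in cells[1:]])


def review_and_run(
    conn: sqlite3.Connection,
    sql: str,
    approve: Callable[[str], bool],
    history: List[Tuple[str, str]],
) -> Optional[str]:
    """Execute sql only if approve(sql) is true; record the outcome in history."""
    if not approve(sql):
        history.append((sql, "rejected"))
        return None
    cursor = conn.execute(sql)
    # cursor.description is None for statements that return no rows.
    headers = [col[0] for col in cursor.description] if cursor.description else []
    rows = cursor.fetchall()
    history.append((sql, "executed"))
    return format_table(headers, rows) if headers else ""
```

In an interactive session the callback could be as simple as `lambda sql: input(f"Run?\n{sql}\n[y/N] ").strip().lower() == "y"`, and the history list can be replayed or edited to refine earlier queries.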

The Outcome

Local-first data intelligence.

The system enables natural language database interaction with zero cloud dependency. Schema-aware prompting steers the model toward structurally valid SQL, while the human-in-the-loop design guards against unintended data mutations. The entire pipeline runs on commodity hardware via Ollama, making it suitable for privacy-sensitive environments.